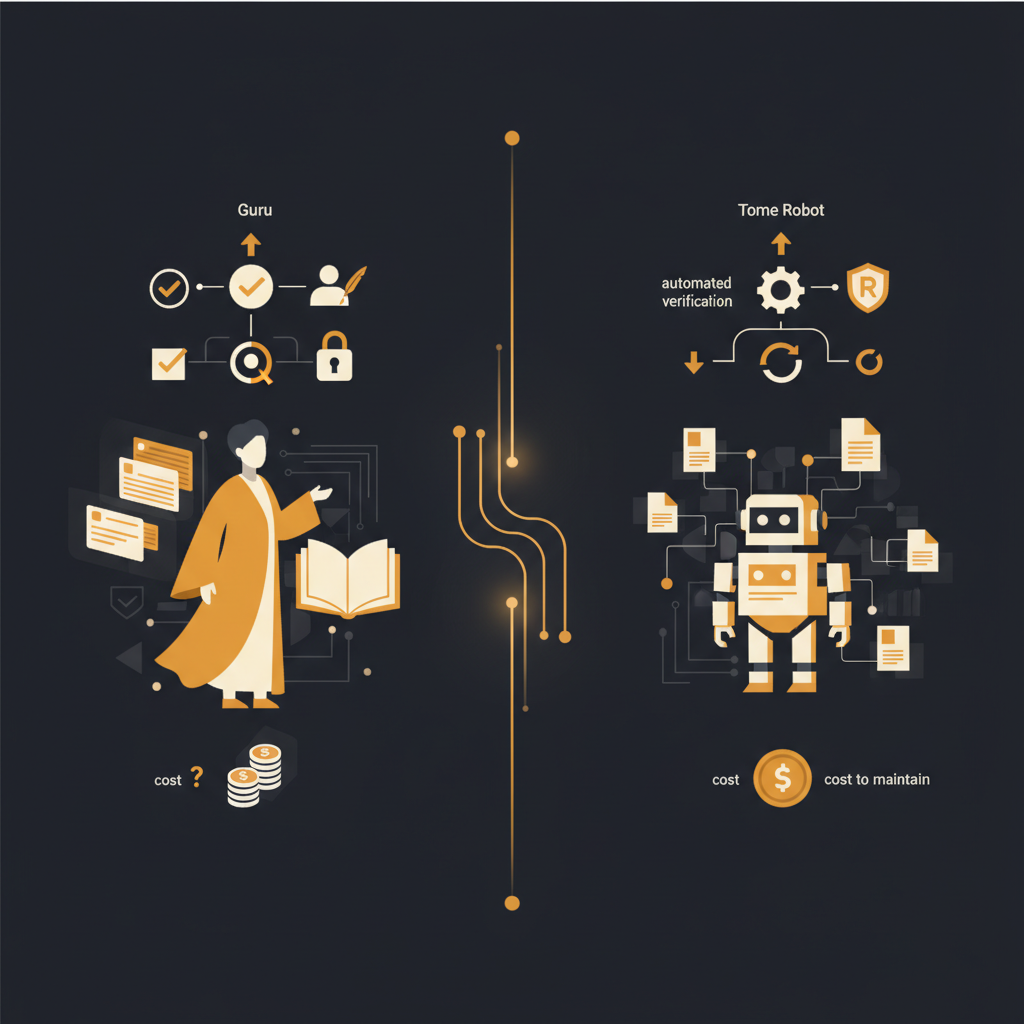

Guru vs. Tome Robot for internal knowledge bases

Guru and similar knowledge bases rely on busy subject matter experts to manually write and verify content. This approach often leads to outdated information and significant maintenance overhead, a challenge automated platforms aim to address.

Internal knowledge bases are indispensable tools for scaling operations, streamlining customer support, and onboarding new employees efficiently. Yet the persistent challenge for many organizations is not merely implementing such a system, but ensuring its content remains accurate, current, and genuinely useful without becoming a perpetual drain on resources. This dilemma highlights a fundamental divergence in philosophy between platforms like Guru, which presuppose human content creation, and emerging solutions designed to minimize this manual burden.

The Foundational Assumption: Manual Content Creation vs. Automated Capture

Guru's strength lies in its ability to centralize expert knowledge. Its model is predicated on the idea that subject matter experts (SMEs) within an organization will actively contribute by writing "cards" – concise pieces of information that answer specific questions or explain processes. This approach is effective when SMEs have both the time and the inclination to document their expertise diligently. The reality, however, often diverges from this ideal.

For senior operations, customer success, support, and engineering leaders, the expectation that their most valuable team members will consistently carve out significant time for documentation is frequently unrealistic. SMEs are typically engaged in high-value, primary tasks. Writing and maintaining documentation, while critical, often becomes a secondary, deprioritized activity. This leads to a persistent backlog of undocumented knowledge, tribal knowledge trapped in individuals' heads, and an immediate content deficit upon the system's launch.

In contrast, other platforms operate on the premise that humans won't consistently write documentation. Instead, they focus on capturing knowledge passively or with minimal effort during the course of regular work. This often involves mechanisms like recording real team walkthroughs, where every click and interaction is transformed into a searchable, narrated, screenshot-rich article. This shift fundamentally alters the initial content creation burden, moving from a proactive writing task to a reactive, often automated, capture process.

Verification Workflows: Human Bottleneck or System Alert?

A key feature of Guru is its explicit verification workflow. Cards are assigned to SMEs, who are responsible for reviewing and verifying their accuracy within a specified timeframe. This system is designed to instill confidence in the information, signifying that a designated expert has attested to its correctness. For leaders concerned with information governance, this human stamp of approval can seem appealing.

However, the efficacy of this verification process is often hampered by the same constraints that limit initial content creation. SMEs are busy. Verification deadlines can be missed, reviews can be perfunctory, or the designated verifier may leave the organization, leaving cards orphaned and unverified. A "verified" badge on a card does not inherently mean the information is up-to-date; it only means it was reviewed by a human at a certain point in time. If the underlying process or UI changes even subtly, a verified card can become misleading or outright incorrect, yet retain its trusted status until the next (often overdue) verification cycle.

Solutions that prioritize automation approach verification from a different angle. Rather than relying on humans to periodically review static text, these systems can actively monitor the environments they document. For example, by detecting when the underlying user interface (UI) of an application has changed, such platforms can automatically flag articles that need updates. This shifts the burden from a manual, scheduled review to a system-driven, event-triggered alert. The emphasis moves from "has an expert reviewed this text?" to "does this documentation accurately reflect the current state of the system?" This distinction is crucial for maintaining operational accuracy at scale.
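One way to picture event-triggered flagging is the minimal sketch below. It is an illustration under stated assumptions, not a description of how Tome Robot or any other product works internally: the `Article` record, the `fingerprint` helper, and the idea of snapshotting a screen as a mapping of element identifiers to visible labels are all hypothetical.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Article:
    """Hypothetical record: one article documents one application screen."""
    title: str
    screen: str          # name of the screen the article documents
    ui_fingerprint: str  # hash of the screen's UI elements at capture time


def fingerprint(elements: Dict[str, str]) -> str:
    """Hash a mapping of element identifier -> visible label.

    Keys are sorted so the result does not depend on dictionary order.
    """
    canonical = "\n".join(f"{key}={elements[key]}" for key in sorted(elements))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def flag_stale(articles: List[Article],
               current_ui: Dict[str, Dict[str, str]]) -> List[str]:
    """Return titles of articles whose documented screen no longer matches.

    An article is flagged when its screen is missing from the current
    snapshot or when the screen's fingerprint differs from the stored one.
    """
    stale = []
    for article in articles:
        elements = current_ui.get(article.screen)
        if elements is None or fingerprint(elements) != article.ui_fingerprint:
            stale.append(article.title)
    return stale


# Assumed example data: a screen as it looked when the article was captured.
recorded = {"save_button": "Save", "name_field": "Name"}
articles = [Article("Create an invoice", "invoice_form", fingerprint(recorded))]

# The vendor later renames the button, so the article gets flagged.
current = {"invoice_form": {"save_button": "Submit", "name_field": "Name"}}
print(flag_stale(articles, current))
```

The point of the design is that the question asked is "does the stored snapshot still match the live system?" rather than "when did a person last look at this?". A real system would need a far richer comparison than a single hash, for example to ignore cosmetic changes.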

Trust Signals and Content Staleness: A Question of Pragmatism

The perceived reliability of a knowledge base hinges on its trust signals. Guru's primary trust signal is the human verifier's name and the verification date. This offers a clear audit trail of who last vouched for the information. While this provides a sense of accountability, it presents a pragmatic challenge: a human's verification, however diligent, is a snapshot in time. As processes evolve and software UIs are updated (often weekly or daily in modern SaaS environments), this human-verified content can quickly become stale, leading to decreased trust and operational errors.

Consider a scenario where a critical business process involves ten steps in a particular application. An SME meticulously documents these steps in a Guru card and verifies it. A week later, the application vendor updates the UI, moving a button, renaming a field, or even altering a step. The Guru card, still "verified" by the SME, now provides incorrect guidance. Users attempting to follow it will become frustrated, make mistakes, or escalate to support, negating the very purpose of the knowledge base. The human verification, in this context, offers a false sense of security.

Automated knowledge platforms, by detecting UI changes and flagging articles accordingly, offer a different kind of trust signal: one rooted in real-time system state. An article that is automatically flagged because its corresponding UI element has changed provides an immediate, unambiguous signal that the content requires attention. This pragmatic approach values the immediate relevance of the information over a static human endorsement. It acknowledges that in dynamic environments, the most trustworthy content is that which directly reflects the current operational reality, rather than a past human review.

The True Cost of Maintenance: Beyond the Subscription Fee

When evaluating knowledge base platforms, the sticker price of the software is only one component of the total cost of ownership. The most significant, yet often overlooked, expense lies in the ongoing labor required to populate and maintain the content. This is where the foundational assumptions of Guru versus automated solutions manifest most clearly.

For Guru, the cost of content maintenance is substantial. It includes the time SMEs spend writing new cards, updating existing ones, and performing verification cycles. Quantifying this can be illuminating: if an organization has 15 SMEs, each dedicating just two hours per week to Guru-related tasks (writing, reviewing, verifying), that amounts to 30 hours of high-value labor weekly. At a conservative fully loaded cost of $100 per hour for senior personnel, this translates to $3,000 per week, or $156,000 over a 52-week year. This figure covers content maintenance labor only and comes on top of the software subscription fees. It also represents a significant opportunity cost: those 30 hours could otherwise be spent on core responsibilities, innovation, or direct customer interaction.
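The arithmetic above is easy to rerun with an organization's own numbers. The short sketch below reproduces the example figures; the function name `maintenance_cost` and the 52-week year are assumptions made for illustration.

```python
def maintenance_cost(num_smes, hours_per_week, hourly_cost, weeks_per_year=52):
    """Return (weekly hours, weekly cost, annual cost) of documentation labor."""
    weekly_hours = num_smes * hours_per_week
    weekly_cost = weekly_hours * hourly_cost
    annual_cost = weekly_cost * weeks_per_year
    return weekly_hours, weekly_cost, annual_cost


# The article's example: 15 SMEs, 2 hours each per week, $100 per hour.
weekly_hours, weekly_cost, annual_cost = maintenance_cost(15, 2, 100)
print(f"{weekly_hours} hours/week, ${weekly_cost:,}/week, ${annual_cost:,}/year")
# prints: 30 hours/week, $3,000/week, $156,000/year
```

Changing any single input, such as the number of SMEs or the hourly rate, shows how quickly the labor figure can outgrow the subscription fee.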

In contrast, platforms built on automated capture significantly reduce this labor overhead. While there is still an initial effort to capture workflows (often during existing operational tasks, effectively piggybacking on work already being done), the ongoing maintenance burden is dramatically lower. The system flags changes, prompting a focused update rather than requiring continuous, manual review of potentially hundreds or thousands of cards. This shifts the investment from constant content creation and re-verification to managing exceptions flagged by the system, thereby reducing the overall human capital expenditure for knowledge base upkeep.

The choice between knowledge base platforms ultimately comes down to whether an organization is willing to invest significant, ongoing human capital in content creation and verification, or prefers systems that minimize this burden through automation. Guru excels at centralizing human-curated knowledge. Solutions that assume humans will not consistently write documentation, and that rely instead on automated capture and UI change detection, offer a compelling alternative for keeping operational guides current and trustworthy in fast-evolving digital environments. Tome Robot, for instance, takes this automated approach, aiming to keep the knowledge base current with minimal human intervention.
